
How to Design Agent Workflows That Don't Need Babysitting

I used to call things automated if they worked once without me touching them.

That standard does not survive real life.

The real test of a workflow is not whether it passes one clean run while the builder is watching closely. The real test is whether it behaves well when life gets uneven:

- timing drifts

- one dependency fails

- a session resets

- a task only partly completes

- a human is too busy to inspect every detail

That is where weak workflow design reveals itself.

And in most cases, the workflow does not fail dramatically. It fails in a much more expensive way.

It almost works.

Why partial success is dangerous

The most fragile workflows do not usually crash in a way that forces immediate attention.

They produce partial success.

One step fails quietly. The rest of the chain keeps moving. A summary gets created from incomplete state. An update gets sent without the right grounding. The operator receives something that looks almost right, which is often more dangerous than a clean failure.

That “almost” creates two problems at once:

- it creates cleanup work

- it creates false confidence

That combination is expensive because people do not just have to fix the workflow. They also have to re-evaluate whether the output can be trusted at all.

That is why workflows that “mostly work” are often worse than workflows that fail cleanly.

A clean no-op can be handled.

A polished half-truth is much harder to work with.

The standard that matters

A good workflow should not be judged by whether it feels clever during setup.

It should be judged by whether it remains dependable when nobody has time to babysit it.

That means the important questions are things like:

- Does it stop safely when prerequisites are missing?

- Does it preserve the ability to recover?

- Does it surface failure clearly?

- Does it protect customer-facing output from internal mess?

- Can an operator understand what happened quickly?

That is what trustworthy automation looks like.

Not “fully autonomous at all costs.”

Operationally sane under imperfect conditions.

The design rules that make workflows hold up

The workflows that hold up tend to follow a few rules.

1. Sequence by dependency, not convenience

Do not run steps in the order that looks tidy on a diagram. Run them in the order that preserves truth.

That means later steps should only happen after the layers they depend on are genuinely ready.

Examples:

- sync before summary

- validate before publish

- retrieval before response generation

- backup after stable state, not in the middle of a half-finished chain

A workflow should respect what downstream steps actually rely on.

Otherwise the system may look active while quietly producing corrupted continuity.
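
Here is a minimal sketch of dependency-first sequencing, using the standard library's `graphlib`. The step names and the `run_step` callback are hypothetical placeholders, not part of any particular agent framework. The idea is simply that a step runs only if everything it depends on actually succeeded, and is skipped otherwise.

```python
from graphlib import TopologicalSorter

# Hypothetical steps; each one maps to the steps it depends on.
DEPENDENCIES = {
    "sync": set(),
    "validate": {"sync"},
    "summary": {"sync"},
    "publish": {"validate", "summary"},
    "backup": {"publish"},
}


def run_in_dependency_order(dependencies, run_step):
    """Run steps so each one starts only after its prerequisites succeeded.

    run_step(name) is assumed to return True on success. A step whose
    prerequisite failed or was skipped is skipped, not run on stale state.
    """
    succeeded, skipped, failed = [], [], []
    for step in TopologicalSorter(dependencies).static_order():
        prerequisites = dependencies.get(step, ())
        if any(dep not in succeeded for dep in prerequisites):
            skipped.append(step)
        elif run_step(step):
            succeeded.append(step)
        else:
            failed.append(step)
    return {"succeeded": succeeded, "skipped": skipped, "failed": failed}
```

If `validate` fails in this sketch, `publish` and `backup` are skipped rather than run against unvalidated state, while `summary` still runs because it only depends on `sync`.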

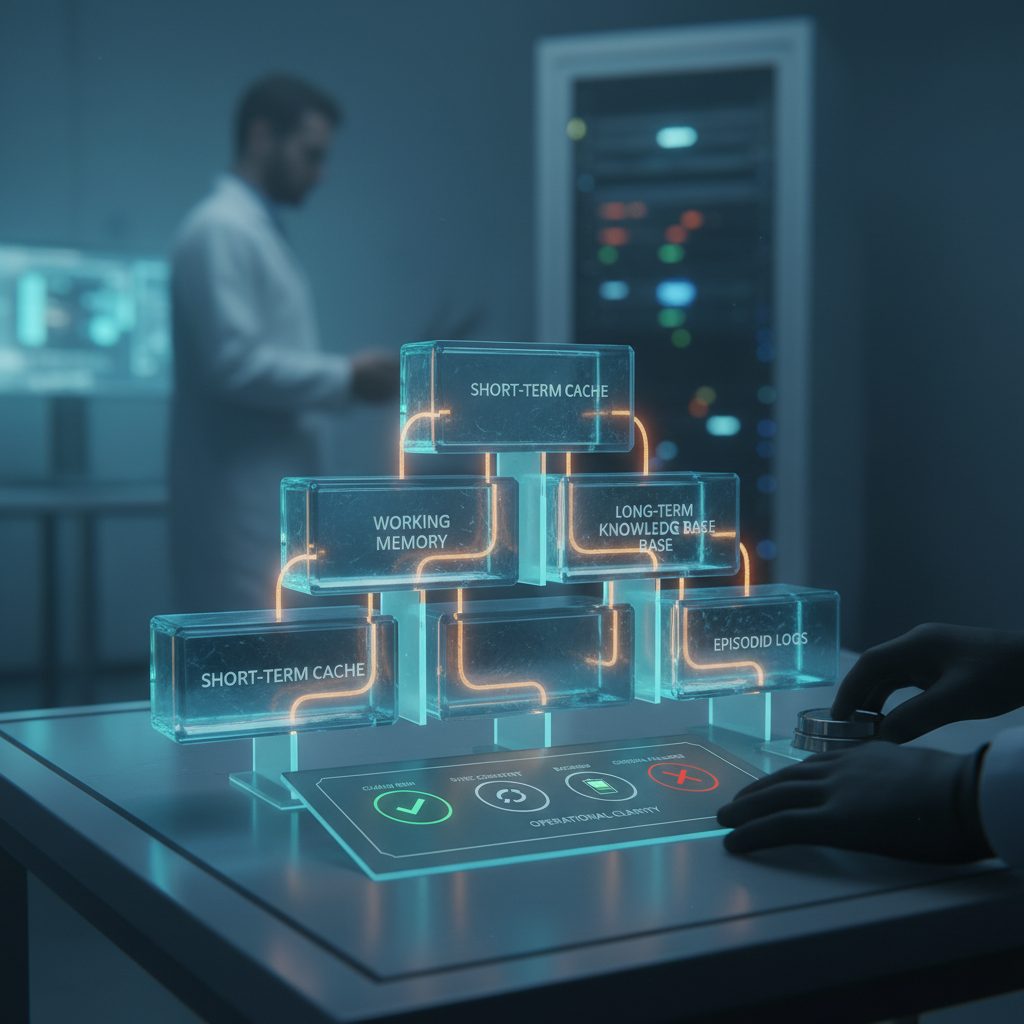

2. Give the operator one health surface

If reliability requires checking five different places, reliability will decay.

You need one brief, one view, or one compact surface that answers the real questions fast:

- Did the chain run?

- Did sync complete?

- Is backup current?

- Did anything fail loudly enough to matter?

That matters because humans do not maintain systems by virtue alone. They maintain them through low-friction visibility.

Operational clarity beats dashboard abundance.
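
One way to picture a single health surface is a small report object that answers those four questions in a few lines of text. This is only a sketch: the field names, the `build_health_report` helper, and the 24-hour freshness window are assumptions chosen for illustration.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import List, Optional


@dataclass
class HealthReport:
    chain_ran: bool
    sync_ok: bool
    backup_current: bool
    failures: List[str]

    @property
    def healthy(self) -> bool:
        return (self.chain_ran and self.sync_ok
                and self.backup_current and not self.failures)

    def brief(self) -> str:
        """Render the whole health surface as a few lines of text."""
        def mark(ok: bool) -> str:
            return "yes" if ok else "NO"

        lines = [
            f"chain ran: {mark(self.chain_ran)}",
            f"sync complete: {mark(self.sync_ok)}",
            f"backup current: {mark(self.backup_current)}",
            f"failures: {len(self.failures)}",
        ]
        lines += [f"  - {failure}" for failure in self.failures]
        return "\n".join(lines)


def build_health_report(last_run: Optional[datetime], sync_ok: bool,
                        last_backup: Optional[datetime], failures: List[str],
                        now: datetime,
                        max_age: timedelta = timedelta(hours=24)) -> HealthReport:
    """Collapse raw run facts into one report (max_age is an assumed window)."""
    return HealthReport(
        chain_ran=last_run is not None and now - last_run <= max_age,
        sync_ok=sync_ok,
        backup_current=last_backup is not None and now - last_backup <= max_age,
        failures=list(failures),
    )
```

The point of the design is that the operator reads `brief()` and nothing else for routine checks. Everything more detailed can live behind it.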

3. Define failure behavior explicitly

A workflow should know what it does when prerequisites are missing.

Good workflow behavior looks like this:

- stop safely

- skip intentionally

- report clearly

- preserve the ability to recover

Bad workflow behavior looks like this:

- continue half-blind

- produce polished nonsense

- hide missing dependencies behind normal-looking output

- leave the operator guessing whether the run “kind of worked”

A safe no-op is better than corrupted continuity.

That is one of the biggest mental shifts in dependable automation.
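
As a rough illustration, explicit failure behavior can be as simple as making every step return a status and a reason instead of returning output or nothing. The `write_summary` step and the `sync_complete` and `records` keys below are hypothetical names, used only to show the shape.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Status(Enum):
    OK = "ok"
    SKIPPED = "skipped"  # intentional safe no-op
    FAILED = "failed"


@dataclass
class StepResult:
    status: Status
    reason: str = ""
    output: Optional[str] = None


def write_summary(state: dict) -> StepResult:
    """Hypothetical summary step that refuses to run on incomplete state."""
    missing = []
    if not state.get("sync_complete"):
        missing.append("sync_complete")
    if "records" not in state:
        missing.append("records")
    if missing:
        # Stop safely: produce nothing and say exactly why.
        return StepResult(Status.SKIPPED,
                          reason="missing prerequisites: " + ", ".join(missing))
    try:
        output = f"{len(state['records'])} records summarized"
    except TypeError as exc:
        # Report clearly instead of emitting a normal-looking summary.
        return StepResult(Status.FAILED, reason=str(exc))
    return StepResult(Status.OK, output=output)
```

A skipped run here carries no output at all, so nothing downstream can mistake it for a finished summary.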

4. Treat reset as part of design

If a workflow touches meaningful state, then reset is not an emergency concept. It is part of the design.

That means a production-worthy workflow should have:

- a backup-first reset path

- recovery notes

- clear handoff state

- a known-good restart story

If reset only exists in one builder's head, it does not meaningfully exist.

And the moment that builder is tired, interrupted, or unavailable, the workflow becomes much less real than it looked.
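
A backup-first reset path might look something like the sketch below, assuming the workflow keeps its state in a directory on disk. The directory layout and the `HANDOFF.json` note are illustrative choices, not a standard.

```python
import json
import shutil
from datetime import datetime, timezone
from pathlib import Path


def backup_first_reset(state_dir: Path, backup_root: Path) -> Path:
    """Copy state to a timestamped backup, then reset to an empty state.

    Returns the backup path. If the backup cannot be made or does not
    match, this raises before the live state is touched.
    """
    stamp = datetime.now(timezone.utc).strftime("%Y%m%dT%H%M%S%fZ")
    backup_dir = backup_root / f"state-{stamp}"
    shutil.copytree(state_dir, backup_dir)  # raises if the copy fails

    # Check that every path in the live state exists in the backup.
    original = sorted(p.relative_to(state_dir) for p in state_dir.rglob("*"))
    copied = sorted(p.relative_to(backup_dir) for p in backup_dir.rglob("*"))
    if original != copied:
        raise RuntimeError("backup is incomplete; state left untouched")

    # Only now clear the live state and leave a handoff note behind.
    shutil.rmtree(state_dir)
    state_dir.mkdir(parents=True)
    handoff = {"reset_at": stamp, "restore_from": str(backup_dir)}
    (state_dir / "HANDOFF.json").write_text(json.dumps(handoff, indent=2))
    return backup_dir
```

The handoff note is the part that moves the reset story out of one person's head: whoever opens the state directory next can see when it was reset and where the last known state lives.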

5. Separate internal operations from customer-visible output

A workflow may need lots of internal complexity to behave well.

That does not mean customer-facing output should expose that complexity.

The system should be able to:

- do ugly internal work

- keep diagnostics private

- preserve logs and repair notes internally

- send clean final output externally

This separation becomes more important as systems grow.

Without it, internal uncertainty starts leaking into the customer experience.
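
A small sketch of that boundary, assuming the workflow hands its result to some `send` function for delivery: diagnostics go to an internal logger, and only the clean message crosses to the customer. `RunOutcome`, `publish`, and the logger name are hypothetical.

```python
import logging
from dataclasses import dataclass, field
from typing import Callable, List

# Internal-only logger; the name is an assumption for this sketch.
logger = logging.getLogger("workflow.internal")


@dataclass
class RunOutcome:
    customer_message: str
    diagnostics: List[str] = field(default_factory=list)


def publish(outcome: RunOutcome, send: Callable[[str], None]) -> None:
    """Keep diagnostics in internal logs; send only the clean message out."""
    for note in outcome.diagnostics:
        logger.info("diagnostic: %s", note)
    send(outcome.customer_message)
```

Because `send` only ever receives `customer_message`, retry counts and repair notes have no path into the customer-facing output unless someone deliberately writes them into that field.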

What “no babysitting” actually means

A workflow that does not need babysitting does not mean a workflow that is never checked.

It means a workflow designed so that routine use does not require constant emotional monitoring.

That is a big difference.

“No babysitting” means:

- failures are understandable

- recovery is documented

- output stays clean

- the human does not need to hover over every run, watching for ambiguity

That is a much better target than fantasizing about perfect autonomy.

Because in real business systems, autonomy without clarity is usually just faster chaos.

The 60-second test

A workflow starts feeling trustworthy when an operator can answer the most important questions in about a minute.

For example:

- Did the chain run?

- Did retrieval or sync complete?

- Did backup finish?

- Did anything drift or require repair?

If those answers are hard to get, the workflow still depends too much on attention.

And attention is exactly the thing automation is supposed to protect.

What makes a workflow actually usable

A workflow becomes usable when it can survive ordinary mess.

Not ideal conditions.

Ordinary mess.

That means it can deal with:

- delays

- partial dependency failure

- imperfect timing

- session churn

- operator interruption

- real-world ambiguity without becoming ambiguous itself

That is the bar worth building for.

Not the clean-room demo.

The tired-Tuesday reality test.

What to change if your workflow still needs babysitting

If one of your workflows still feels like something you have to “watch closely,” inspect the structure before you add more prompt logic. Tighten sequencing, define failure behavior, shrink the health surface, and document the reset path.

The goal is not to make the workflow look more advanced.

The goal is to make it dependable enough that it can keep doing useful work when you are too busy to hover over it.